Small Language Models: Privacy, Cost and GDPR Compliance for European Businesses


A few months ago, an Italian manufacturing company asked me to review their AI setup. They had integrated a major US-based LLM API into their document processing workflow — invoices, purchase orders, supplier contracts. The system worked well. Then their DPO asked a simple question: "Where does that data go when we send it to the API?"

The answer involved Standard Contractual Clauses, a US company's sub-processors list, EU-US Data Privacy Framework adequacy decisions, and ultimately a legal opinion that cost more than the API subscription. They weren't doing anything wrong — but the compliance overhead was real, ongoing, and fragile.

This is the problem small language models solve, and not only for cost reasons. The advantages are structural, and they matter specifically if you operate under GDPR or are working through your EU AI Act compliance obligations.

What "small" actually means — and what it doesn't

The term is relative and shifting, but in practice a small language model (SLM) today means anything from 1 billion to roughly 22 billion parameters. For context, GPT-4 class models are estimated at several hundred billion parameters. The key difference isn't intelligence — it's specialisation and resource requirements.

A frontier model like Claude or GPT-4 is trained to handle an enormous range of tasks: creative writing, complex reasoning, multilingual conversation, code generation, data analysis. That breadth requires massive infrastructure to run and, critically, requires sending your data to someone else's servers.

A well-chosen SLM trained or fine-tuned for a narrower task — classifying support tickets, extracting fields from invoices, answering questions based on your documentation, summarising meeting notes — can match or exceed the larger model's accuracy on that specific task, while running on hardware you already own.

The specialist analogy holds here. You wouldn't send a GP to perform surgery. But you also wouldn't call a neurosurgeon to prescribe antibiotics. The question isn't "which model is best" — it's "which model is right for this task."

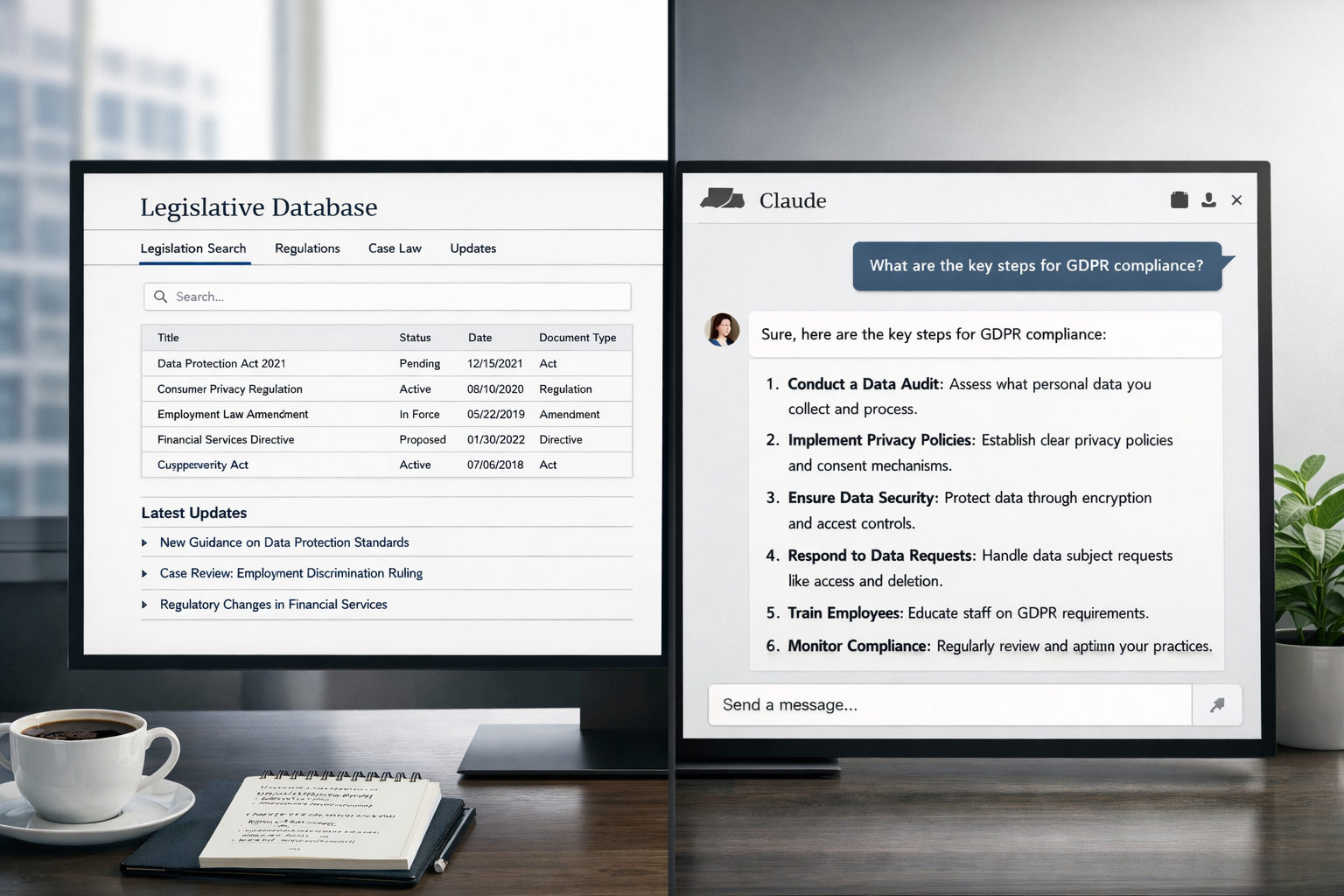

The GDPR angle: why on-premises deployment changes the compliance calculus

Most European businesses using AI APIs today are doing so under GDPR Article 46 mechanisms — typically Standard Contractual Clauses (SCCs). These are valid, but they carry compliance overhead: data transfer impact assessments, vendor audits, sub-processor management, and ongoing monitoring as the legal landscape shifts.

The Schrems II ruling in 2020 invalidated the previous Privacy Shield framework and established that SCCs alone may not be sufficient when the recipient country's surveillance laws conflict with EU data protection standards. The EU-US Data Privacy Framework (2023) partially resolved this for certified US companies, but it remains subject to legal challenge — the next Schrems ruling is a when, not an if.

If the model runs on your infrastructure — your server, your cloud region in Frankfurt or Milan, your employee's laptop — there is no international data transfer. The GDPR question becomes substantially simpler. You still have obligations around data minimisation, access controls, and retention policies, but you've eliminated an entire compliance surface.

For sectors that handle sensitive data — healthcare, legal, financial services, HR — this isn't a minor detail. Italy's Garante per la Protezione dei Dati Personali has been one of the more active EU data protection authorities, with enforcement actions against ChatGPT (2023), Replika, and several analytics providers. The direction of travel is clear.

The AI Act adds another layer

The EU AI Act entered into force in August 2024. The prohibitions on unacceptable-risk systems applied from February 2025. Rules for General Purpose AI (GPAI) providers — the large foundation model companies — applied from August 2025. The obligations for high-risk AI systems apply from August 2026.

Where SLMs become strategically interesting is in the risk classification logic. A narrow, task-specific model that you deploy internally for document classification is much easier to position as limited-risk than a general-purpose AI system connected to external APIs processing personal data. The Act's requirements scale with risk — lower risk means lighter obligations, fewer conformity assessments, less documentation burden.

This doesn't mean SLMs are automatically AI Act-compliant. The Act classifies risk by use case, not by model size: if your SLM makes or informs decisions with legal or similarly significant effects on individuals (in hiring or credit scoring, for example), it can fall into the high-risk category however small it is. But for the broad category of internal productivity use cases (document processing, knowledge retrieval, summarisation), local SLM deployment typically keeps you in a simpler compliance tier.

How local deployment actually works in 2026

The tooling has improved dramatically. Running a capable open-weight model locally is no longer an infrastructure project — it's closer to a weekend experiment.

Ollama is the most practical starting point. It's an open-source tool that handles model downloading, quantized model formats, and inference serving behind a local API. You install it on macOS, Linux or Windows, run `ollama pull qwen2.5:7b`, and within minutes you have a local endpoint at `localhost:11434` that accepts standard chat API requests. Any application built for the OpenAI API can be pointed at Ollama by changing its base URL to `http://localhost:11434/v1`, Ollama's OpenAI-compatible endpoint.
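To make the base-URL change concrete, here is a minimal sketch that calls a local Ollama server through its OpenAI-compatible `/v1/chat/completions` route using only the Python standard library. It assumes Ollama is running on the default port and that `qwen2.5:7b` has already been pulled; `build_chat_request` and `chat` are illustrative names, not part of any library.

```python
import json
import urllib.request

# Ollama's OpenAI-compatible endpoint; only this base URL differs from a hosted API.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0,  # low temperature suits extraction and classification tasks
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = "qwen2.5:7b", base_url: str = OLLAMA_BASE_URL) -> str:
    """Send the request and return the reply text (requires a running Ollama server)."""
    req = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(req, timeout=120) as resp:
        body = json.load(resp)
    # OpenAI-style response shape: the reply text is in choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

Calling `chat("Summarise this supplier email: ...")` sends the request. `build_chat_request` is split out so the payload can be inspected or logged without a server; pointing `base_url` at a hosted provider would additionally need an API key header, which this sketch omits.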

Hardware requirements are more approachable than most people expect. A quantized 7B-parameter model (in Q4 format, which stores weights in roughly 4 bits instead of 16 and so shrinks the memory footprint about 4x, with modest quality trade-offs) runs on a machine with 8GB of unified memory; a current MacBook Air qualifies. A 14B model needs 16GB. For a dedicated on-premises server, a €1,500–2,500 mini-PC with a modern CPU handles most document processing workloads at reasonable throughput. GPU acceleration significantly increases speed but isn't required for most enterprise text tasks.
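The memory figures follow from simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8. The sketch below is a back-of-envelope estimate under that assumption only. Real Q4 formats store somewhat more than 4 bits per weight on average, and the context (KV) cache and runtime add overhead on top, which is why a 7B model with about 3.5 GB of weights is still paired with an 8GB machine.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations and runtime overhead, which all add to this figure.
    """
    bytes_per_weight = bits_per_weight / 8
    return params_billion * bytes_per_weight


for params in (7, 14):
    fp16 = weight_memory_gb(params, 16)
    q4 = weight_memory_gb(params, 4)
    print(f"{params}B: FP16 ~{fp16:.1f} GB, 4-bit ~{q4:.1f} GB ({fp16 / q4:.0f}x smaller)")
```

For the 7B case this prints `7B: FP16 ~14.0 GB, 4-bit ~3.5 GB (4x smaller)`.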

The models worth knowing about as of early 2026:

| Model | Parameters | Strengths | Minimum RAM (Q4) |
|---|---|---|---|
| Qwen 2.5 (Alibaba) | 0.5B–72B | Strong multilingual performance, good at structured output and Italian text | 4GB (7B variant) |
| Phi-4 (Microsoft) | 14B | Reasoning and instruction-following above its weight class; solid for document analysis | 10GB |
| Gemma 2 (Google) | 2B, 9B, 27B | Efficient inference, strong safety tuning, good for regulated environments | 2GB (2B variant) |
| Mistral Small 3 (Mistral AI) | 24B | Multilingual, strong at classification and extraction, Apache 2.0 license | 14GB |
| Llama 3.2 (Meta) | 1B, 3B, 11B (vision) | Smallest variants for edge/mobile; vision model handles document images | 1GB (1B variant) |

Multilingual support matters if you're processing Italian documents. Qwen 2.5 and Mistral Small 3 have notably better Italian comprehension than earlier open-weight models, which were heavily English-skewed. This is a practical consideration for any Italian business: a model that struggles with Italian syntax will produce worse extractions regardless of its parameter count.

Where SLMs genuinely win — and where they don't

The honest version of this comparison is that SLMs are not a drop-in replacement for frontier models across all tasks. They are a better fit for specific task categories, and understanding where the boundaries are will save you a failed pilot.

SLMs handle well: document field extraction (invoice data, contract clauses, form responses), text classification (ticket routing, intent detection, sentiment analysis), structured output generation (JSON from unstructured text), RAG-based Q&A on a defined document corpus, summarisation of routine documents.

SLMs struggle with: complex multi-step reasoning chains, tasks requiring broad world knowledge not in the training data, very long context windows (many SLMs are limited to 8K–32K tokens in practice, vs. 200K+ for frontier models), nuanced creative writing, and novel coding tasks from scratch.
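For text classification, one of the tasks listed as a good fit, a common pattern is to give the model a closed label set and then map its reply back onto that set, so a chatty or ambiguous answer falls back to a safe default instead of creating a new category. A minimal sketch, assuming hypothetical ticket-routing labels; the prompt string would be sent with whatever client you use, such as a local Ollama endpoint.

```python
import re

# Hypothetical routing labels; replace with your own taxonomy.
ALLOWED_LABELS = ("BILLING", "TECHNICAL", "SALES", "OTHER")


def build_classification_prompt(ticket: str, labels: tuple[str, ...] = ALLOWED_LABELS) -> str:
    """Prompt that asks for exactly one label from a closed set."""
    return (
        "Classify the support ticket into exactly one of these labels: "
        + ", ".join(labels)
        + ".\nReply with the label only.\n\nTicket:\n"
        + ticket
    )


def parse_label(reply: str, labels: tuple[str, ...] = ALLOWED_LABELS,
                fallback: str = "OTHER") -> str:
    """Map a free-text model reply onto the closed label set, or return the fallback."""
    cleaned = reply.strip().strip(".\"'").upper()
    if cleaned in labels:
        return cleaned
    # Tolerate replies like "Label: BILLING", but only if exactly one known label appears.
    words = set(re.findall(r"[A-Z]+", cleaned))
    found = [label for label in labels if label in words]
    return found[0] if len(found) == 1 else fallback
```

Routing ambiguous replies to the fallback keeps the failure mode visible (an `OTHER` queue a human checks) rather than silently misrouting a ticket.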

The workflow we typically follow when evaluating whether an SLM fits a use case: take 50–100 representative examples from the actual task, run them through both the SLM candidate and a frontier model, compare outputs manually on a random 20-sample subset, then decide. If the SLM's accuracy on real data is within acceptable margins, local deployment wins on every other dimension — cost, latency, privacy, compliance. If it isn't, we either fine-tune on domain data or accept that this particular task warrants the larger model.
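The evaluation step can be sketched in a few lines. This assumes each example's SLM and frontier outputs are already collected as short, comparable strings (labels or single field values). Exact-match agreement is a hypothetical first-pass metric, not a substitute for the manual review, and the frontier model's answer is not ground truth.

```python
import random
from dataclasses import dataclass


@dataclass
class EvalCase:
    case_id: str
    slm_output: str       # answer from the local SLM candidate
    frontier_output: str  # answer from the frontier model on the same input


def agreement_rate(cases: list[EvalCase]) -> float:
    """Share of cases where both models agree after trimming and case-folding."""
    if not cases:
        raise ValueError("need at least one case")
    same = sum(
        c.slm_output.strip().casefold() == c.frontier_output.strip().casefold()
        for c in cases
    )
    return same / len(cases)


def manual_review_sample(cases: list[EvalCase], k: int = 20, seed: int = 0) -> list[EvalCase]:
    """Random subset for side-by-side human review; a fixed seed makes it reproducible."""
    rng = random.Random(seed)
    return rng.sample(cases, min(k, len(cases)))
```

Disagreements are usually the most informative cases to include in the manual review, since they show where the two models diverge on real data.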

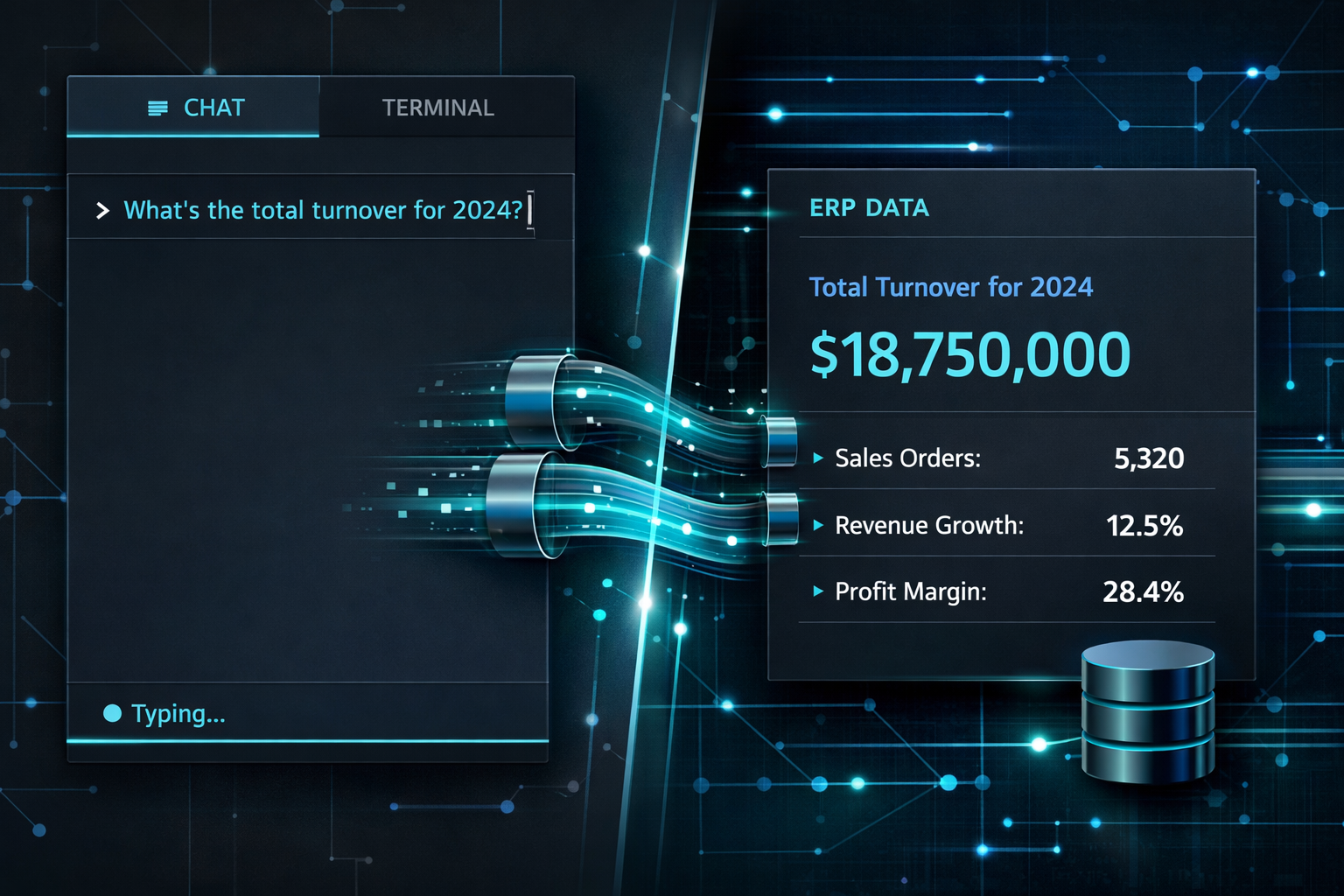

A concrete example: fatture elettroniche and the Italian accountant

One implementation pattern that recurs in the Italian SME context is processing fatture elettroniche (electronic invoices in the SDI XML format) to extract structured data for ERP ingestion. The task is well-defined (parse the supplier VAT number, line items, IVA codes and payment terms) and the documents follow a known schema. It's exactly the kind of narrow, structured extraction task where a fine-tuned or instruction-tuned SLM can match or outperform a larger generalist.
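Because SDI invoices are XML with a published schema (FatturaPA), the fields named here also sit at fixed element paths. A minimal sketch using Python's standard `xml.etree.ElementTree` can read them deterministically, which gives reference values to check an SLM's extraction against or to label an evaluation set. The sample is trimmed and not schema-valid, the VAT number is invented, and only a handful of elements from the FatturaPA 1.2 layout are covered.

```python
import xml.etree.ElementTree as ET

# Trimmed, hypothetical sample: not schema-valid, VAT number invented for illustration.
SAMPLE_XML = """<p:FatturaElettronica versione="FPR12" xmlns:p="http://ivaservizi.agenziaentrate.gov.it/docs/xsd/fatture/v1.2">
  <FatturaElettronicaHeader>
    <CedentePrestatore><DatiAnagrafici><IdFiscaleIVA>
      <IdPaese>IT</IdPaese><IdCodice>01234567897</IdCodice>
    </IdFiscaleIVA></DatiAnagrafici></CedentePrestatore>
  </FatturaElettronicaHeader>
  <FatturaElettronicaBody>
    <DatiBeniServizi>
      <DettaglioLinee><NumeroLinea>1</NumeroLinea><Descrizione>M8 bolts</Descrizione>
        <PrezzoTotale>120.00</PrezzoTotale><AliquotaIVA>22.00</AliquotaIVA></DettaglioLinee>
      <DettaglioLinee><NumeroLinea>2</NumeroLinea><Descrizione>Transport</Descrizione>
        <PrezzoTotale>30.00</PrezzoTotale><AliquotaIVA>22.00</AliquotaIVA></DettaglioLinee>
    </DatiBeniServizi>
    <DatiPagamento>
      <CondizioniPagamento>TP02</CondizioniPagamento>
      <DettaglioPagamento><ModalitaPagamento>MP05</ModalitaPagamento>
        <DataScadenzaPagamento>2026-03-31</DataScadenzaPagamento></DettaglioPagamento>
    </DatiPagamento>
  </FatturaElettronicaBody>
</p:FatturaElettronica>"""


def _strip_namespaces(root: ET.Element) -> ET.Element:
    """Drop '{namespace}' prefixes so plain paths work whether or not elements are qualified."""
    for el in root.iter():
        if isinstance(el.tag, str) and "}" in el.tag:
            el.tag = el.tag.split("}", 1)[1]
    return root


def extract_invoice_fields(xml_text: str) -> dict:
    """Read supplier VAT, line items and payment terms from a FatturaPA-style document."""
    root = _strip_namespaces(ET.fromstring(xml_text))
    vat = root.find("FatturaElettronicaHeader/CedentePrestatore/DatiAnagrafici/IdFiscaleIVA")
    lines = [
        {
            "description": line.findtext("Descrizione"),
            "total": line.findtext("PrezzoTotale"),
            "vat_rate": line.findtext("AliquotaIVA"),
        }
        for line in root.iterfind("FatturaElettronicaBody/DatiBeniServizi/DettaglioLinee")
    ]
    return {
        "supplier_vat": f"{vat.findtext('IdPaese')}{vat.findtext('IdCodice')}" if vat is not None else None,
        "lines": lines,
        # TP02 = payment in full; MP05 = bank transfer
        "payment_terms": root.findtext("FatturaElettronicaBody/DatiPagamento/CondizioniPagamento"),
        "due_date": root.findtext(
            "FatturaElettronicaBody/DatiPagamento/DettaglioPagamento/DataScadenzaPagamento"
        ),
    }
```

Values come back as strings exactly as they appear in the XML; converting amounts (for example to `Decimal`) is left to the ERP-ingestion step.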

Running Qwen 2.5 7B locally via Ollama on a standard server, with a prompt engineered around the SDI schema, achieves extraction accuracy comparable to a frontier API call — without the data leaving the premises, without per-call costs at scale, and without legal exposure when the invoices contain commercially sensitive supplier terms. The setup time is measured in days, not months.
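Whether avoiding per-call costs pays off depends on volume: the break-even point is the hardware cost divided by the per-document saving. The sketch below uses hypothetical prices (an assumed API cost and an assumed local electricity cost per invoice), not quotes, and ignores staff time for setup, updates and testing.

```python
from decimal import Decimal, ROUND_CEILING


def break_even_documents(hardware_eur: Decimal, api_cost_per_doc: Decimal,
                         local_cost_per_doc: Decimal) -> int:
    """Documents after which a one-off hardware purchase beats per-call API pricing."""
    saving_per_doc = api_cost_per_doc - local_cost_per_doc
    if saving_per_doc <= 0:
        raise ValueError("local processing is not cheaper per document; no break-even")
    return int((hardware_eur / saving_per_doc).to_integral_value(rounding=ROUND_CEILING))


# Hypothetical inputs: a server inside the €1,500–2,500 range, an assumed API cost of
# €0.01 per invoice and an assumed local running cost of €0.002 per invoice.
docs = break_even_documents(Decimal("2000"), Decimal("0.01"), Decimal("0.002"))
print(f"Break-even after ~{docs:,} documents")
```

With these placeholder inputs it prints `Break-even after ~250,000 documents`; `Decimal` avoids floating-point rounding on small per-document amounts.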

This is the practical argument for SLMs in the Italian market: not that they're smarter than GPT-4, but that for a well-scoped task, they're accurate enough, they're local, and they're legally simpler.

The trade-offs nobody mentions upfront

Running your own models means owning your own problems. A few things to factor in before committing:

Model updates are your responsibility. When a new version of Qwen or Mistral ships, you decide whether and when to update. You run your own regression tests. For many businesses, this is a manageable overhead. For others, the managed API model is simpler.
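A regression check before switching model versions can be as simple as scoring the current and candidate models on the same labelled test set and refusing the upgrade if accuracy drops by more than a tolerance. A minimal sketch, assuming predictions and gold labels are dictionaries keyed by case ID; the one-point tolerance is a hypothetical policy choice.

```python
from dataclasses import dataclass


@dataclass
class RegressionReport:
    current_accuracy: float
    candidate_accuracy: float
    newly_broken: list[str]  # case IDs the current model gets right and the candidate gets wrong
    passed: bool


def accuracy(predictions: dict[str, str], gold: dict[str, str]) -> float:
    """Exact-match accuracy over the labelled cases; missing predictions count as wrong."""
    return sum(predictions.get(case) == label for case, label in gold.items()) / len(gold)


def compare_versions(gold: dict[str, str], current: dict[str, str],
                     candidate: dict[str, str], tolerance: float = 0.01) -> RegressionReport:
    """Gate an upgrade: pass only if the candidate is no worse than current minus tolerance."""
    cur_acc = accuracy(current, gold)
    cand_acc = accuracy(candidate, gold)
    broken = sorted(case for case, label in gold.items()
                    if current.get(case) == label and candidate.get(case) != label)
    return RegressionReport(cur_acc, cand_acc, broken, passed=cand_acc >= cur_acc - tolerance)
```

Listing `newly_broken` matters even when the gate passes: a new version can keep the same overall accuracy while failing on different, possibly more important, cases.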

Fine-tuning may be necessary for domain-specific accuracy. Off-the-shelf models are trained on general text. If your use case involves heavily domain-specific language — legal Italian, specific industry terminology, proprietary product codes — base model performance may be below acceptable thresholds until you fine-tune on representative examples.

Context window limits bite on long documents. If you need to analyse a 200-page contract as a single unit, most SLMs will force you into chunking strategies with RAG architecture. This is solvable, but adds implementation complexity compared to a frontier model that can ingest the whole document.
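The simplest chunking strategy is a sliding window with overlap, so text cut at one boundary still appears whole in a neighbouring chunk. This sketch counts whitespace-separated words as a rough stand-in for tokens (an assumption; a production pipeline would count with the model's own tokenizer), and the default sizes are hypothetical. For contracts, splitting on clause or section headings may work better than fixed windows.

```python
def chunk_words(text: str, max_words: int = 1500, overlap: int = 150) -> list[str]:
    """Split text into overlapping windows of at most max_words words.

    Words are only a rough proxy for tokens; size windows so that one chunk
    plus the prompt fits comfortably inside the model's context window.
    """
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the document
    return chunks
```

Each chunk can then be embedded for retrieval or processed on its own, with per-chunk results merged afterwards.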

Hallucination rates are higher out-of-domain. SLMs trained for specific tasks perform well in-domain and poorly outside it. If your application needs to handle unpredictable inputs, build robust output validation into the pipeline.
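Output validation means treating the model's reply as untrusted input. The sketch below parses a JSON reply, checks a hypothetical set of required fields, and verifies the supplier VAT number against the check-digit rule used for Italian Partita IVA numbers (a Luhn-style algorithm over 11 digits). Anything that fails is rejected for human review rather than silently corrected.

```python
import json
import re
from typing import Optional

# Hypothetical extraction schema for an invoice summary.
REQUIRED_FIELDS = {"supplier_vat", "total", "due_date"}
_VAT_RE = re.compile(r"(?:IT)?(\d{11})")


def partita_iva_is_valid(digits: str) -> bool:
    """Check digit for an 11-digit Italian VAT number (Luhn-style)."""
    if not re.fullmatch(r"\d{11}", digits):
        return False
    total = 0
    for i, ch in enumerate(digits[:10]):
        d = int(ch)
        if i % 2 == 1:  # the 2nd, 4th, ... digits are doubled
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return (10 - total % 10) % 10 == int(digits[10])


def validate_extraction(raw: str) -> tuple[Optional[dict], list[str]]:
    """Return (data, []) if the reply passes every check, else (None, errors)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["expected a JSON object"]
    errors = [f"missing field: {name}" for name in sorted(REQUIRED_FIELDS - set(data))]
    if "supplier_vat" in data:
        match = _VAT_RE.fullmatch(str(data["supplier_vat"]))
        if not match or not partita_iva_is_valid(match.group(1)):
            errors.append("supplier_vat fails format or check-digit test")
    if "total" in data:
        try:
            if float(data["total"]) < 0:
                errors.append("total is negative")
        except (TypeError, ValueError):
            errors.append("total is not a number")
    return (None if errors else data), errors
```

A check digit catches many transcription and hallucination errors cheaply, but a valid-looking number can still be the wrong supplier, so cross-checking against your supplier master data remains worthwhile.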

The decision framework

The right question isn't "should we use small language models?" It's "which tasks in our workflow have a cost, privacy, or compliance reason to run locally, and is the accuracy acceptable?" For many European businesses, the answer is: more tasks than you'd initially expect.

Start with the use cases where data sensitivity is highest and task scope is narrowest. That's where SLMs deliver the most value with the least risk. Expand from there based on measured accuracy, not assumptions.

Evaluating a local AI deployment for your business?

We help European businesses identify which AI use cases are suited to on-premises SLM deployment versus managed API — and build the proof of concept to validate the accuracy before committing infrastructure. If you're working through GDPR or AI Act compliance questions alongside this, we can scope both together.

Let's talk about your use case →

Drawing on over 20 years of experience as a Fractional Innovation Manager, I love bridging diverse knowledge areas while fostering seamless collaboration among internal departments, external agencies, and providers. My approach is characterized by a collaborative and engaging management style, strong negotiation skills, and a clear vision to preemptively address operational risks.
